The process of migration is closely tied to a set of specific adaptations needed to cope with the new ecosystem. Transformation is part of any organic migration process and brings many challenges. Today’s data-driven businesses cannot afford to overlook the challenges of data migration either.

Data

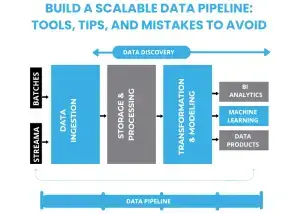

In a data pipeline, ETL (Extract, Transform, Load) is one of the essential components.

When the environment in which the data will be used differs from the platform it came from, data transformation is necessary. In practical terms, data transformation changes the form of the data using a specific program. That program not only understands the data’s original format but also determines which format the data should be converted to, so that it fits the new environment and delivers the most value. Data transformation has two critical phases: data mapping and code generation. Together they produce an executable program that contains the data map specifications and is run on the systems to convert the data.
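
To make the two phases concrete, here is a minimal Python sketch. It assumes a hypothetical data map that pairs each target field with a source field and a conversion function; the field names (`CUST_NO`, `customer_id`, and so on) are invented for illustration. The code generation phase is approximated by compiling the map into a Python function (a closure) rather than by emitting source code.

```python
from typing import Any, Callable, Dict, Tuple

Record = Dict[str, Any]

# Hypothetical data map: target field -> (source field, converter).
# Field names and converters are illustrative assumptions.
MAPPING_SPEC: Dict[str, Tuple[str, Callable[[Any], Any]]] = {
    "customer_id": ("CUST_NO", int),
    "full_name": ("NAME", str.strip),
    "signup_date": ("CREATED", lambda s: s.replace("/", "-")),
}


def compile_mapping(spec: Dict[str, Tuple[str, Callable[[Any], Any]]]) -> Callable[[Record], Record]:
    """Stand-in for code generation: turn a declarative map into an executable transform."""

    def transform(source: Record) -> Record:
        target: Record = {}
        for target_field, (source_field, convert) in spec.items():
            # A missing source field raises KeyError instead of loading a partial row.
            target[target_field] = convert(source[source_field])
        return target

    return transform


transform = compile_mapping(MAPPING_SPEC)
row = {"CUST_NO": "0042", "NAME": "  Ada Lovelace ", "CREATED": "2021/03/15"}
print(transform(row))
# {'customer_id': 42, 'full_name': 'Ada Lovelace', 'signup_date': '2021-03-15'}
```

Keeping the map declarative means a change in the target environment usually touches only `MAPPING_SPEC`, not the transform logic.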

Although a wide variety of data technologies exist, several substantial challenges remain in data transformation and in ETL as a process. We classify these challenges into two types: (i) tactical and (ii) strategic.

Tactical challenges:

- In an ETL cycle, mapping data from one system to another, transforming it programmatically, and laying out the conversions needed for proper use is a challenge in itself!

- All transformations need to pass a series of tests to ensure that only useful, meaningful data reaches the final data warehouse. These tests are often time-consuming and of limited effectiveness, especially for large data sets.

- Loading data from different sources into a destination system or data warehouse has a number of limitations: the process is tedious, the risk of data loss and corruption is high, and in most cases the data cannot be verified.
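
One inexpensive test that addresses the risk of loss and corruption is reconciliation: after loading, compare what was sent with what actually landed. The sketch below assumes a hypothetical `customers` table and uses Python’s built-in `sqlite3` in-memory database as a stand-in for the warehouse; `fingerprint`, `load`, and `verify` are illustrative names, not part of any ETL tool.

```python
import hashlib
import sqlite3
from typing import Iterable, List, Tuple

Row = Tuple[int, str]


def fingerprint(rows: Iterable[Row]) -> Tuple[int, str]:
    """Row count plus an order-independent SHA-256 digest of the rows."""
    digests = sorted(hashlib.sha256(repr(tuple(r)).encode()).hexdigest() for r in rows)
    combined = hashlib.sha256("".join(digests).encode()).hexdigest()
    return len(digests), combined


def load(conn: sqlite3.Connection, rows: List[Row]) -> None:
    """Load rows into a hypothetical 'customers' table in the destination."""
    conn.execute("CREATE TABLE IF NOT EXISTS customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO customers (id, name) VALUES (?, ?)", rows)
    conn.commit()


def verify(conn: sqlite3.Connection, source_rows: List[Row]) -> bool:
    """True only if the destination holds exactly the rows that were sent."""
    loaded = conn.execute("SELECT id, name FROM customers").fetchall()
    return fingerprint(source_rows) == fingerprint(loaded)


conn = sqlite3.connect(":memory:")  # in-memory stand-in for the warehouse
rows = [(1, "Ada"), (2, "Grace")]
load(conn, rows)
print(verify(conn, rows))  # True
conn.execute("DELETE FROM customers WHERE id = 2")  # simulate a lost row
print(verify(conn, rows))  # False
```

A count-plus-checksum check only shows that the load preserved the rows; it says nothing about whether the data is meaningful, so it complements rather than replaces business-rule tests.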

Strategic challenges:

- The buy, build, or open-source decision for the ETL process

Data transformation is possible by developing and applying the appropriate programs, and developers can carry out this task. However, they are seldom connected with the final business decision-making process, so the work of writing, maintaining, modifying, and supporting ETL programs becomes unfocused and delivers less value, with wasted iterations. Rather than building in-house, adopting open-source or enterprise-level ETL tools can be a considered, sustainable, and effective approach for most business scenarios. Because the formats, scale, and speed of data change over time, the choice of tools and related preferences is crucial to a successful ETL cycle and should be handled with care from the very beginning.

- Dealing with data from different sources

Naturally, organizations collect many different data sets from numerous sources. Although most data warehouses can perform data modeling to support well-informed decisions, discrepancies between data from different sources must first be removed. Data needs to be thoroughly cleansed and normalized before it is sent to the warehouse, both to minimize or eliminate garbage data and, most importantly, to make data from different sources work together consistently, so that the outcomes are meaningful and valuable to users.
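
As a small illustration of cleansing and normalization, the sketch below merges two hypothetical sources (a CRM that writes dates as `MM/DD/YYYY` and a billing system that uses `YYYY-MM-DD`), normalizes emails and dates, drops rows it cannot repair, and removes duplicates by email. The source formats, field names, and the “first record wins” deduplication rule are all assumptions.

```python
from datetime import datetime
from typing import Dict, Iterable, List, Optional

# Hypothetical source formats: CRM uses MM/DD/YYYY, billing uses YYYY-MM-DD.
DATE_FORMATS = ("%m/%d/%Y", "%Y-%m-%d")


def parse_date(value: str) -> Optional[str]:
    """Normalize any supported date string to ISO 8601, or None if unparseable."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def normalize(record: Dict[str, str]) -> Optional[Dict[str, str]]:
    """Canonicalize one raw record; return None for rows that cannot be repaired."""
    email = record.get("email", "").strip().lower()
    signup = parse_date(record.get("signup", ""))
    if not email or signup is None:
        return None  # garbage row: drop it rather than load it
    return {"email": email, "signup": signup}


def cleanse(*sources: Iterable[Dict[str, str]]) -> List[Dict[str, str]]:
    """Merge sources, normalize each row, and keep the first row seen per email."""
    seen: Dict[str, Dict[str, str]] = {}
    for source in sources:
        for raw in source:
            row = normalize(raw)
            if row is not None and row["email"] not in seen:
                seen[row["email"]] = row
    return list(seen.values())


crm = [{"email": "Ada@Example.com ", "signup": "03/15/2021"}, {"email": "", "signup": "01/01/2020"}]
billing = [{"email": "ada@example.com", "signup": "2021-03-15"},
           {"email": "grace@example.com", "signup": "2020-12-09"}]
print(cleanse(crm, billing))
# [{'email': 'ada@example.com', 'signup': '2021-03-15'}, {'email': 'grace@example.com', 'signup': '2020-12-09'}]
```

Once both sources share one canonical form, the same customer appearing in each system collapses to a single row instead of two conflicting ones.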

- Business vs. technology priorities

Before beginning the ETL process, it is essential to understand the business requirements and then choose ETL tools that fit them. The sensible approach is to first understand what the business wants from the data and then decide on the approach and the relevant tools, so as to get the most value out of the data while leaving room to accommodate future data needs.

- Developing a sustainable data pipeline

A successful end-to-end data pipeline has many integration points and components, and they are hard to anticipate before the process actually starts. Every element of the ETL architecture should rest on flexible, independent technical decisions, so that each one can be replaced easily as data needs change in scalability, functionality, and maintenance, without affecting the entire pipeline. This requires sound, strategic knowledge of the various tools and their pros and cons.
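
One way to keep components independent and replaceable is to have each stage depend only on a small interface. This Python sketch uses `typing.Protocol` to define hypothetical `Extractor`, `Transformer`, and `Loader` interfaces; the concrete classes are toy stand-ins, not real connectors.

```python
from typing import Any, Dict, Iterable, List, Protocol

Record = Dict[str, Any]


class Extractor(Protocol):
    def extract(self) -> Iterable[Record]: ...


class Transformer(Protocol):
    def transform(self, records: Iterable[Record]) -> Iterable[Record]: ...


class Loader(Protocol):
    def load(self, records: Iterable[Record]) -> int: ...


class Pipeline:
    """Wires stages together through their interfaces only."""

    def __init__(self, extractor: Extractor, transformers: List[Transformer], loader: Loader) -> None:
        self.extractor = extractor
        self.transformers = transformers
        self.loader = loader

    def run(self) -> int:
        records = self.extractor.extract()
        for step in self.transformers:
            records = step.transform(records)
        return self.loader.load(records)  # number of records loaded


# Hypothetical concrete stages, for illustration only.
class ListExtractor:
    def __init__(self, rows: List[Record]) -> None:
        self.rows = rows

    def extract(self) -> Iterable[Record]:
        return iter(self.rows)


class UppercaseNames:
    def transform(self, records: Iterable[Record]) -> Iterable[Record]:
        return ({**r, "name": r["name"].upper()} for r in records)


class MemoryLoader:
    def __init__(self) -> None:
        self.stored: List[Record] = []

    def load(self, records: Iterable[Record]) -> int:
        batch = list(records)
        self.stored.extend(batch)
        return len(batch)


loader = MemoryLoader()
count = Pipeline(ListExtractor([{"name": "ada"}, {"name": "grace"}]), [UppercaseNames()], loader).run()
print(count, loader.stored)  # 2 [{'name': 'ADA'}, {'name': 'GRACE'}]
```

Swapping `MemoryLoader` for a warehouse loader only requires another class with a compatible `load` method; `Pipeline` and the other stages stay unchanged.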

- Establishing reliable predictions regarding the data needs

Predicting future data volume is one of the hardest tasks in building a sustainable data pipeline. The organization’s growth plans are the most influential input for predicting the scale and volume of data the business will need to process. Committing to data predictions and data transportation needs, and establishing an ETL flow that can handle a dramatic change in the scale at which data arrives, is a highly demanding task.
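
Turning a growth plan into a capacity forecast can start with a simple compound-growth model, where volume after n months is V0 × (1 + g)^n. The sketch below assumes a constant monthly growth rate g taken from the growth plan and a fixed daily capacity for the current ETL flow; both are simplifying assumptions, since real volumes rarely grow so smoothly. The function name and the numbers are illustrative.

```python
from typing import Optional


def months_until_capacity(
    current_gb_per_day: float,
    monthly_growth: float,
    capacity_gb_per_day: float,
    horizon_months: int = 120,
) -> Optional[int]:
    """First month n in which V0 * (1 + g)**n exceeds capacity, or None within the horizon."""
    volume = current_gb_per_day
    for month in range(horizon_months + 1):
        if volume > capacity_gb_per_day:
            return month
        volume *= 1 + monthly_growth  # apply one month of compound growth
    return None


# Compare a baseline plan with an aggressive one (illustrative numbers only).
for label, growth in [("baseline", 0.10), ("aggressive", 0.25)]:
    print(label, months_until_capacity(100, growth, 200))
# baseline 8
# aggressive 4
```

Running the same check under several growth scenarios shows how sensitive the pipeline’s usable lifespan is to the growth assumption.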

Data is a lifeline for any business today, but there is no alternative to a self-sustaining, far-sighted approach to building a meaningful data pipeline. Businesses must keep this reality in mind to extract valuable output from the ocean of data they are sailing through.

The team at Cruz Street Digital is well equipped to help by providing a “CDO on Demand”, essentially a fractional executive service whose job is to audit the data, technology, and people your organization needs to execute the strategy you want. Our team will help you with both a strategic road map and data architecture, data acquisition, solution negotiation, and more. Contact us today for an expert consultation!